Python is the language of choice for most of the data science community. This article is a road map to learning Python for Data Science. It’s suitable for starting data scientists and for those already there who want to learn more about using Python for data science.

We’ll fly by all the essential elements data scientists use while providing links to more thorough explanations. This way, you can skip the stuff you already know and dive right into what you don’t know. Along the way, I’ll guide you to the essential Python packages used by the data science community.

I recommend you bookmark this page to return to it easily. And last but not least: this page is a continuous work in progress. I’ll be adding content and links, and I’d love to get your feedback too. So if you find something you think belongs here along your journey, don’t hesitate to message me.

What is Data Science?

Before we start, though, I’d like to describe what I see as data science more formally. While I assume you have a general idea of what data science is, it’s still a good idea to define it more specifically. It’ll also help us define a clear learning path.

As you may know, giving a single, all-encompassing definition of a data scientist is hard. If we ask ten people, I’m sure it will result in at least eleven definitions of data science. So here’s my take on it.

Working with data

To be a data scientist means knowing a lot about several areas. But first and foremost, you have to get comfortable with data. What kinds of data are there, how can it be stored, and how can it be retrieved? Is it real-time data or historical data? Can it be queried with SQL? Is it text, images, video, or a combination of these?

How you manage and process your data depends on a number of properties or qualities that allow us to describe it more accurately. These are also called the five V’s of data:

- Volume: how much data is there?

- Velocity: how quickly is the data flowing? What is its timeliness (e.g., is it real-time data?)

- Variety: are there different types and data sources, or just one type?

- Veracity: the data quality; is it complete, is it easy to parse, is it a steady stream?

- Value: at the end of all your processing, what value does the data bring to the table? Think of useful insights for management.

Although you’ll hear about these five V’s more often in the world of data engineering and big data, I strongly believe that they apply to all of the areas of expertise and are a nice way of looking at data.

Programming / scripting

In order to read, process, and store data, you need to have basic programming skills. You don’t need to be a software engineer, and you probably don’t need to know about software design, but you do need a certain level of scripting skills.

There are fantastic libraries and tools out there for data scientists. For many data science jobs, all you need to do is combine the right tools and libraries. However, you need to know one or more programming languages to do so. Python has proven itself to be an ideal language for data science for several reasons:

- It’s easy to learn

- You can use it both interactively and in the form of scripts

- There are (literally) tons of useful libraries out there

There’s a reason the data science community embraced Python early on. On top of that, many new, super-useful Python libraries have come out in recent years specifically for data science.

Math and statistics

As if the above skills aren’t hard enough on their own, you also need a fairly good knowledge of math, statistics, and working scientifically.

Visualization

Eventually, you want to present your results to your team, your manager, or the world! For that, you’ll need to visualize your results. You need to know about creating basic graphs, pie charts, and histograms, and about plotting data on a map.

Expert knowledge

Each working field has or requires:

- specific terminology,

- its own rules and regulations,

- expert knowledge.

Generally, you’ll need to dive into what makes a field what it is. You can’t analyze data from a specific field of expertise without understanding the basic terminology and rules.

So what is a data scientist?

Coming back to our original question: what is data science? Or: what makes someone a data scientist? You need at least basic skills in all the subject areas named above. Every data scientist will have different levels of these skills. You can be strong in one, and weak in another. That’s OK.

For example, if you come from a math background, you’ll be great at the math part, but perhaps you’ll have a hard time wrestling with the data initially. On the other hand, some data scientists come from the AI/machine learning world and will tend toward that part of the job and less toward other parts. It doesn’t matter too much: ultimately, we all need to learn and fill in the gaps. The differences are what make this field exciting and full of learning opportunities!

Learn Python

The first stop when you want to use Python for Data Science: learning Python. If you’re completely new to Python, start learning the language itself first:

- Start with my free Python tutorial or the premium Python for Beginners course

- Check out our Python learning resources page for books and other useful websites

Learn the command line

It helps a lot if you are comfortable on the command line. It’s one of those things you have to get started with and get used to. Once you do, you’ll find that you use it more and more since it is so much more efficient than using GUIs for everything. Using the command line will make you a much more versatile computer user, and you’ll quickly discover that some command-line tools can do what would otherwise be a big, ugly script and a full day of work.

The good news: it’s not as hard as you might think. We have a fairly extensive chapter on this site about using the Unix command line, the basic shell commands you need to know, creating shell scripts, and even Bash multiprocessing! I strongly recommend you check it out.

A data science working environment

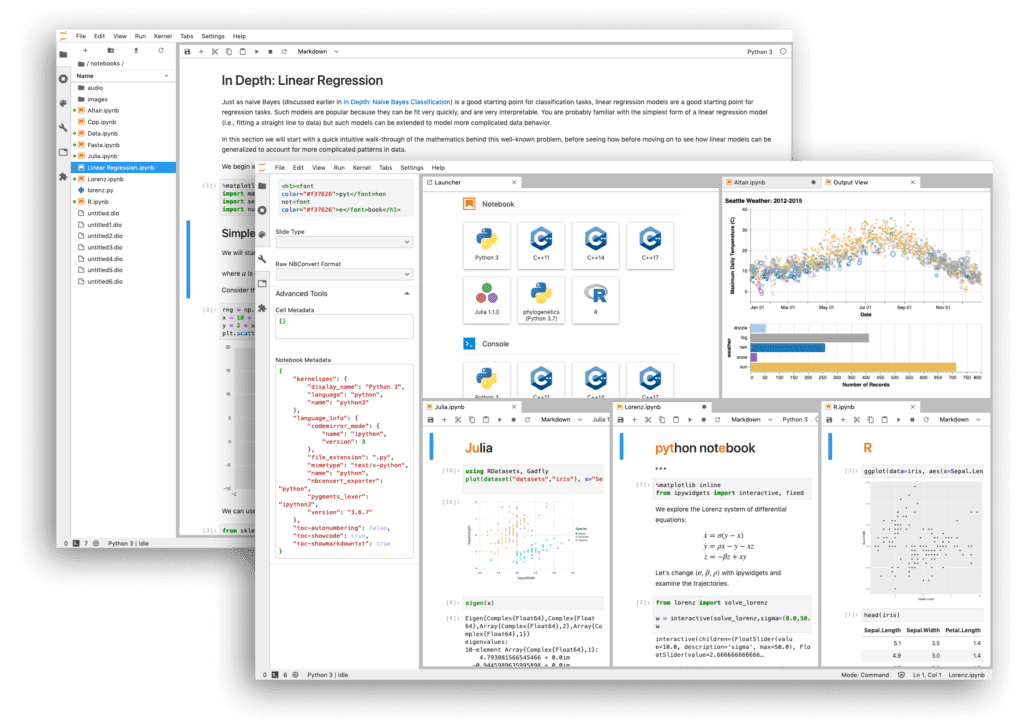

There are roughly two ways of using Python for Data Science:

- Creating and running scripts

- Using an interactive shell, like a REPL or a notebook

Interactive notebooks have become extremely popular within the data science community, but you should certainly not rule out the power of a simple Python script to do some grunt work. Both have their place.

Check out our detailed article about Jupyter Notebook. You’ll learn about the advantages of using it for data science, how it works, and how to install it. There, you’ll also learn when a notebook is the right choice and when you’re better off writing a script.

Reading data

There are many ways to get the data you need to analyze. We’ll quickly go over the most common ways of getting data, and I’ll point you to some of the best libraries to get the job done.

Data from local files

Often, the data will be stored on a file system, so you need to be able to open and read files with Python. If the data is formatted in JSON, you need a Python JSON parser. Python can do this natively. If you need to read YAML data, there’s a Python YAML parser as well.
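As a minimal sketch of reading JSON from a local file, the example below uses only Python's built-in `json` module. The file name `weather.json` and the sample record are made up for illustration; the file is written to a temporary directory first so the snippet runs on its own.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical sample data; in practice, the file would already exist on disk
sample = [{"ts": "2020-04-06T00:00:00", "temperature": 12.5}]

with tempfile.TemporaryDirectory() as tmp_dir:
    # Write the sample to a temporary file so the example is self-contained
    path = Path(tmp_dir) / "weather.json"
    path.write_text(json.dumps(sample), encoding="utf-8")

    # Open the file and let the json module parse it into Python objects
    with path.open(encoding="utf-8") as f:
        records = json.load(f)

# JSON arrays become lists and JSON objects become dictionaries
first_temperature = records[0]["temperature"]
print(first_temperature)
```

Once parsed, the data is just a list of dictionaries, so you can loop over it or hand it to other libraries.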

Data from an API

Data will often be offered to you through a REST API. In the world of Python, one of the most used and most user-friendly libraries to fetch data over HTTP is called Requests. With requests, fetching data from an API can be as simple as this:

```python
>>> import requests
>>> data = requests.get('https://some-weather-service.example/api/historic/2020-04-06')
>>> data.json()
[{'ts':'2020-04-06T00:00:00', 'temperature': 12.5, .... }]
```

This is the absolute basic use case, but Requests has you covered too when you need to POST data, when you need to log in to an API, etcetera. There are plenty of examples on the Requests website itself and on sites like StackOverflow.

Scraping data from the World Wide Web

Sometimes, data is not available through an easy-to-parse API but only from a website. If the data is only available from a website, you will need to retrieve it from the raw HTML and JavaScript. Doing this is called scraping, and it can be hard. But like with everything, the Python ecosystem has you covered!

Before you consider scraping data, you need to realize a few things, though:

- A website’s structure can change without notice. There are no guarantees, so your scraper can break at any time.

- Not all websites allow you to scrape them. Some websites will actively try to detect scrapers and block them.

- Even if a website allows scraping (or doesn’t care), you are responsible for doing so in an orderly fashion. It’s not difficult to take down a site with a simple Python script just by making many requests in a short time span. Please realize that you might break the law by doing so. A less extreme outcome is that your IP address will be banned for life on that website (and possibly on other sites as well).

- Most websites offer a robots.txt file. You should respect such a file.

Good scrapers will have options to limit the so-called crawl rate and will have the option to respect robots.txt files too. In theory, you can create your own scraper with, for example, the Requests library, but I strongly recommend against it. It’s a lot of work, and it’s easy to mess up and get banned.
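To give an idea of what respecting a robots.txt file involves, here is a small sketch using `urllib.robotparser` from Python's standard library. The robots.txt content, the `my-bot` user agent, and the example.com URLs are invented for illustration, and the rules are parsed from a string so that nothing is fetched over the network. This is not how any particular scraper implements it, just the basic idea.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real one lives at https://<site>/robots.txt
robots_txt = """\
User-agent: *
Crawl-delay: 5
Disallow: /private/
"""

parser = RobotFileParser()
# Parse the rules from a list of lines instead of downloading them
parser.parse(robots_txt.splitlines())

# Ask whether our (hypothetical) bot may fetch a given URL
allowed_private = parser.can_fetch("my-bot", "https://example.com/private/data.html")
allowed_public = parser.can_fetch("my-bot", "https://example.com/public/data.html")

# The number of seconds the site asks crawlers to wait between requests
delay = parser.crawl_delay("my-bot")

print(allowed_private)  # False
print(allowed_public)   # True
print(delay)            # 5
```

A well-behaved scraper would skip disallowed URLs and sleep for at least the requested delay between requests.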

Instead, you should look at Scrapy, which is a mature, easy-to-use library to build a high-quality web scraper.

Crunching data

Two libraries are among the main reasons why Python is so popular for data science:

- NumPy: “The fundamental package for scientific computing with Python.”

- Pandas: “a fast, powerful, flexible, and easy-to-use open-source data analysis and manipulation tool.”

Let’s look at these two in a little more detail!

NumPy

NumPy’s strength lies in working with arrays of data. These can be one-dimensional arrays, multi-dimensional arrays, and matrices. NumPy also offers a lot of mathematical operations that can be applied to these data structures.

NumPy’s core functionality is mostly implemented in C, making it much faster than equivalent pure-Python code. As long as you use NumPy arrays and operations, your code can approach the speed of the same operations written in a fast, compiled language. You can learn more in my introduction to NumPy.
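The following sketch shows what working with NumPy arrays and operations looks like in practice. The temperature values are made up; the point is that each operation is applied to the whole array at once, without an explicit Python loop.

```python
import numpy as np

# Hypothetical temperature readings in degrees Celsius
temperatures_c = np.array([12.5, 14.0, 9.5, 11.0])

# Arithmetic is applied element-wise to the whole array
temperatures_f = temperatures_c * 9 / 5 + 32

# Aggregations such as the mean are built in
mean_c = temperatures_c.mean()

# Multi-dimensional arrays work the same way: a 2x3 matrix of 0..5
matrix = np.arange(6).reshape(2, 3)

# Sum over the rows (axis 0), giving one total per column
column_sums = matrix.sum(axis=0)

print(temperatures_f)
print(mean_c)
print(column_sums)
```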

Pandas

Like NumPy, Pandas offers us ways to work with in-memory data efficiently. Both libraries have an overlap in functionality. An important distinction is that Pandas offers us something called DataFrames. DataFrames are comparable to how a spreadsheet works, and you might know data frames from other languages, like R.

Pandas is the right tool for you when working with tabular data, such as data stored in spreadsheets or databases. Pandas will help you to explore, clean, and process your data.
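As a rough illustration of exploring, cleaning, and processing tabular data, the sketch below builds a small DataFrame by hand. The city names and temperatures are invented; in a real project, the data would more likely come from a CSV file, a spreadsheet, or a database.

```python
import pandas as pd

# Hypothetical measurements, including one missing value (None)
df = pd.DataFrame(
    {
        "city": ["Amsterdam", "Amsterdam", "Utrecht", "Utrecht"],
        "temperature": [12.5, None, 10.0, 11.0],
    }
)

# Explore: count the missing values in each column
missing_per_column = df.isna().sum()

# Clean: drop the rows that contain missing values
clean = df.dropna()

# Process: compute the average temperature per city
mean_per_city = clean.groupby("city")["temperature"].mean()

print(missing_per_column)
print(mean_per_city)
```

Dropping rows is only one way to deal with missing values; depending on the data, filling them in may be the better choice.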

Visualization

Every Python data scientist needs to visualize his or her results at some point, and there are many ways to visualize your work with Python. However, if I were allowed to recommend only one library, it would be a relatively new one: Streamlit.

Streamlit

Streamlit is so powerful that it deserves a separate article to demonstrate what it has to offer. But to summarize: Streamlit allows you to turn any script into a full-blown, interactive web application without the need to know HTML, CSS, and JavaScript. All that with just a few lines of code. It’s truly powerful; go read about Streamlit!

Streamlit uses many well-known packages internally. You can always opt to use those instead, but Streamlit makes using them a lot easier. Another cool feature of Streamlit is that most figures and tables allow you to easily export them to an image or CSV file as well.

Dash

Another, more mature product is Dash. Like Streamlit, it allows you to create and host web apps to visualize data quickly. To get an idea of what Dash can do, head to their documentation.

Keep learning

You can read the book ‘Python Data Science Handbook’ by Jake VanderPlas for free right here. The book is from 2016, so it’s a bit dated. For example, at the time, Streamlit didn’t exist. Also, the book explains IPython, which is at the core of what is now Jupyter Notebook. The functionality is mostly the same, so it’s still useful.